Camera Motion Event Notifications with Locally-Processed AI

In this post I will share how I set up push notifications for my home security camera by integrating Home Assistant with Blue Iris. Motion events from the camera are filtered by locally-processed computer vision so that alerts are only generated by objects such as cars or people. I will also show how I leveraged the AI to generate a static alert feed and motion sensors in Home Assistant.

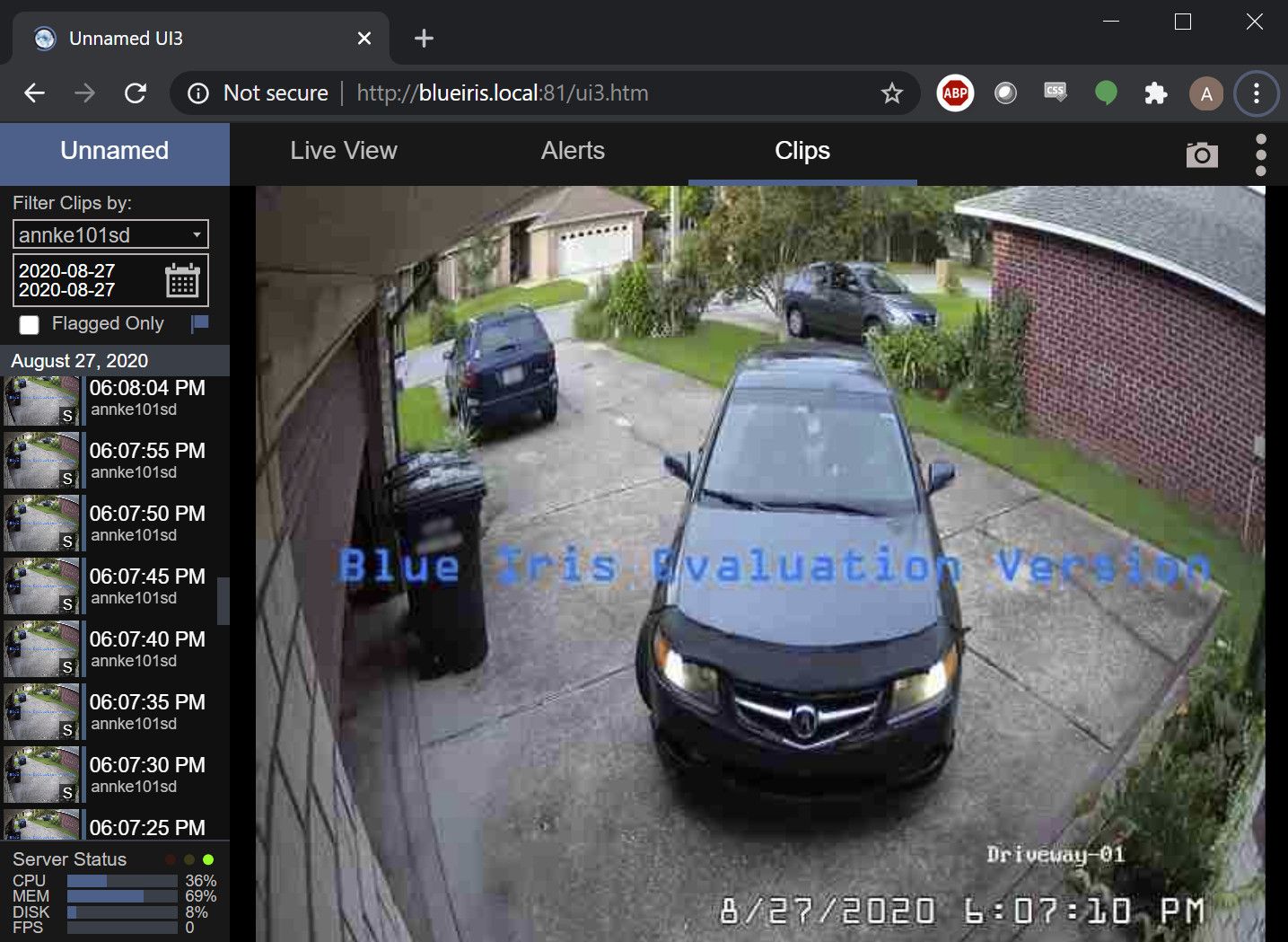

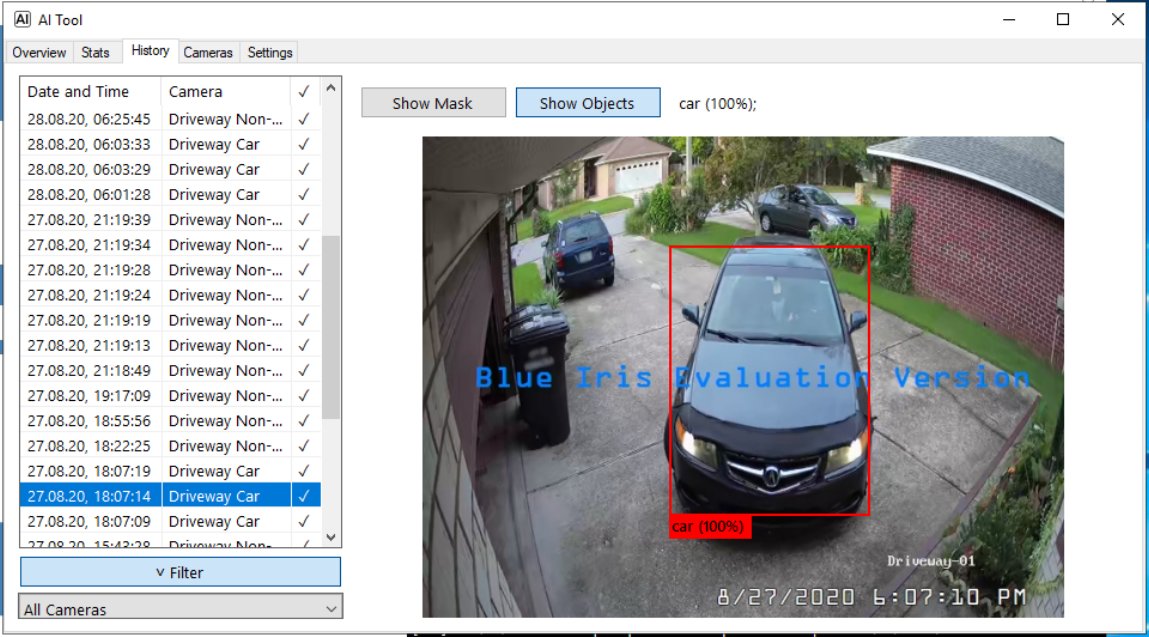

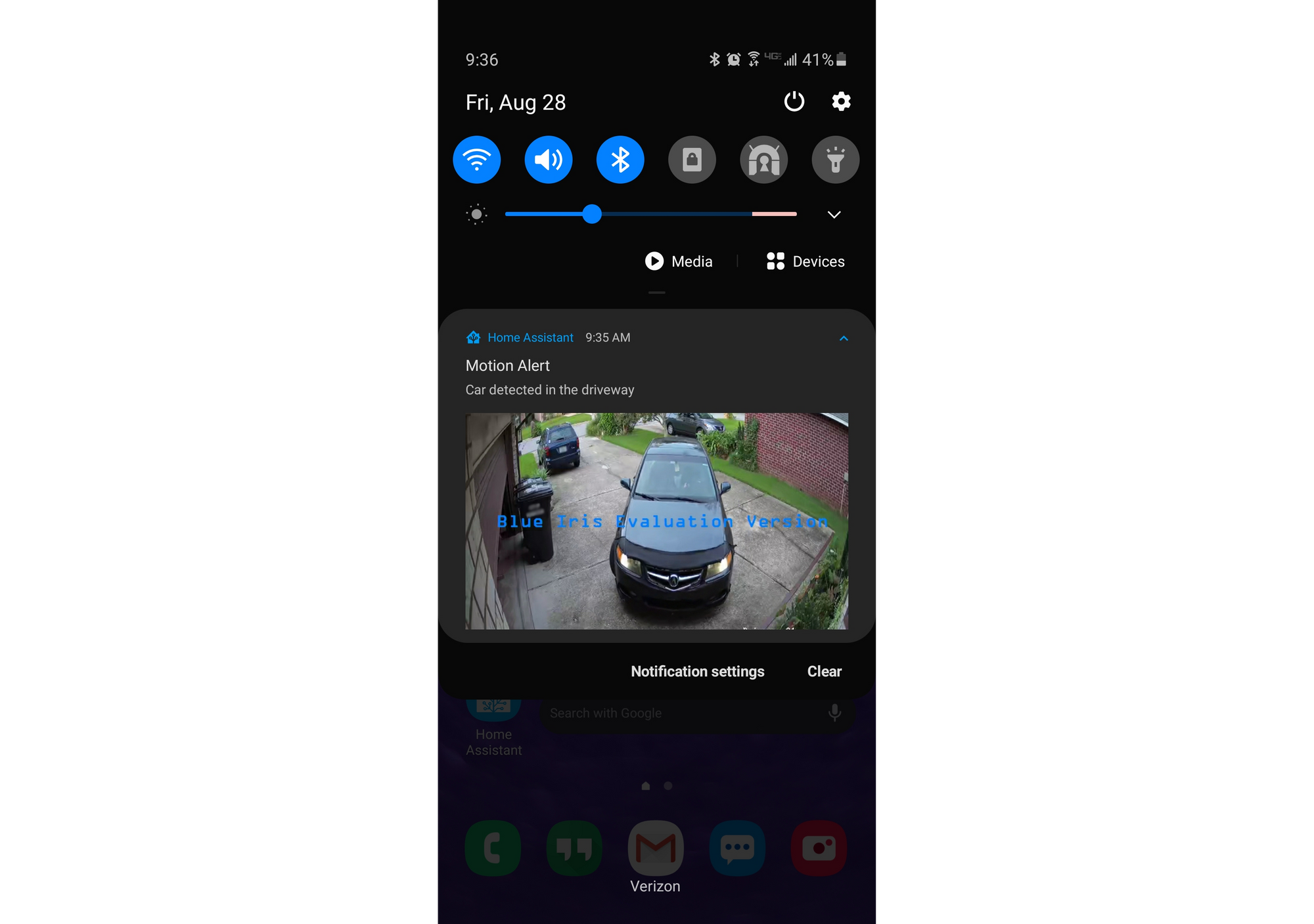

First, a demonstration of what this integration does when a car pulls into the driveway:

1. Car enters the driveway and triggers motion detection in Blue Iris

2. AI Tool checks the motion event for relevant objects

3. The relevant image is published as a push notification by Home Assistant

4. Still-image camera feed in HA is updated

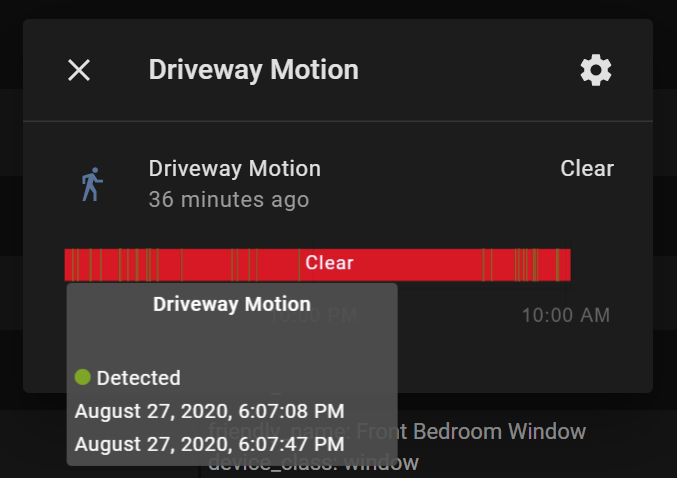

5. Motion Sensor in HA is turned on until the motion stops

Getting Started

First, I recommend watching this very well-made video from The Hook Up. This video was my main inspiration for this build, so major credit to The Hook Up for doing most of the heavy lifting. I won't spend too much of this post repeating what is already in the video, but rather I will focus on what I did to make my setup better.

The integration outlined in the video uses the MQTT feature in Blue Iris to trip a binary sensor in Home Assistant. Once the sensor is working, it is trivial to grab a still frame from the camera feed and publish a notification. The only problem is that the still frame is grabbed at the end of the whole sequence, several seconds after the motion event occurred. As a result, the objects that triggered the alert might already be out of frame by the time the notification is published. I ended up with a bunch of notifications that showed nothing discernible in the frame.

What I really wanted was the exact snapshot that the AI flagged as a relevant alert. The main problem to solve was how exactly to get the images into HA once they were flagged by the tool.

Pushing Alert Images to Home Assistant

The AI Tool from gentlepumpkin has some integration features, but they are limited to HTTP GETs and Telegram messages. The HTTP requests can't carry the alert image, so they won't work here. The Telegram integration works very well, but it's not a suitable bridge between Blue Iris and Home Assistant because it relies on a cloud-connected service. I prefer to keep everything as local as possible.

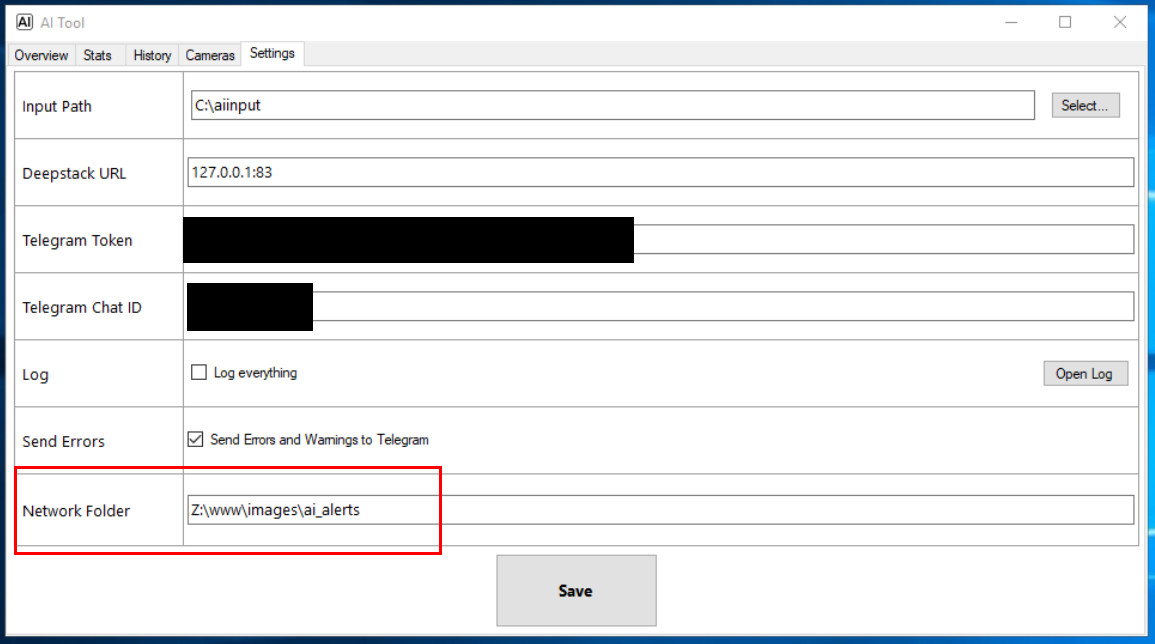

Fortunately, the AI Tool is open source, so I decided to start my own fork to add a new feature: saving to a network path.

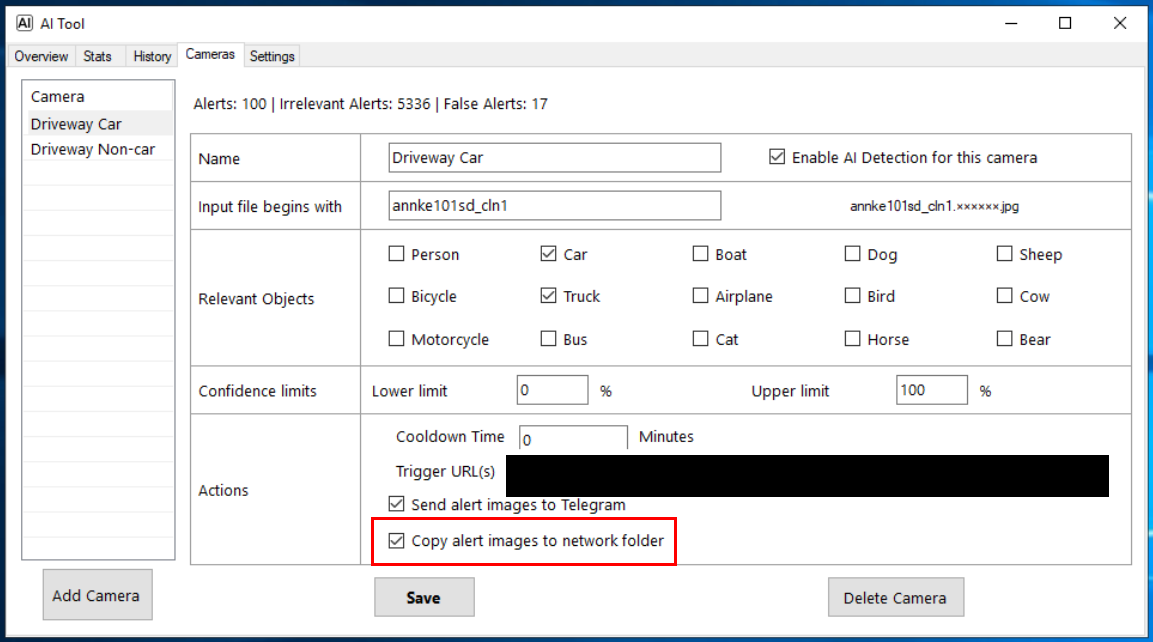

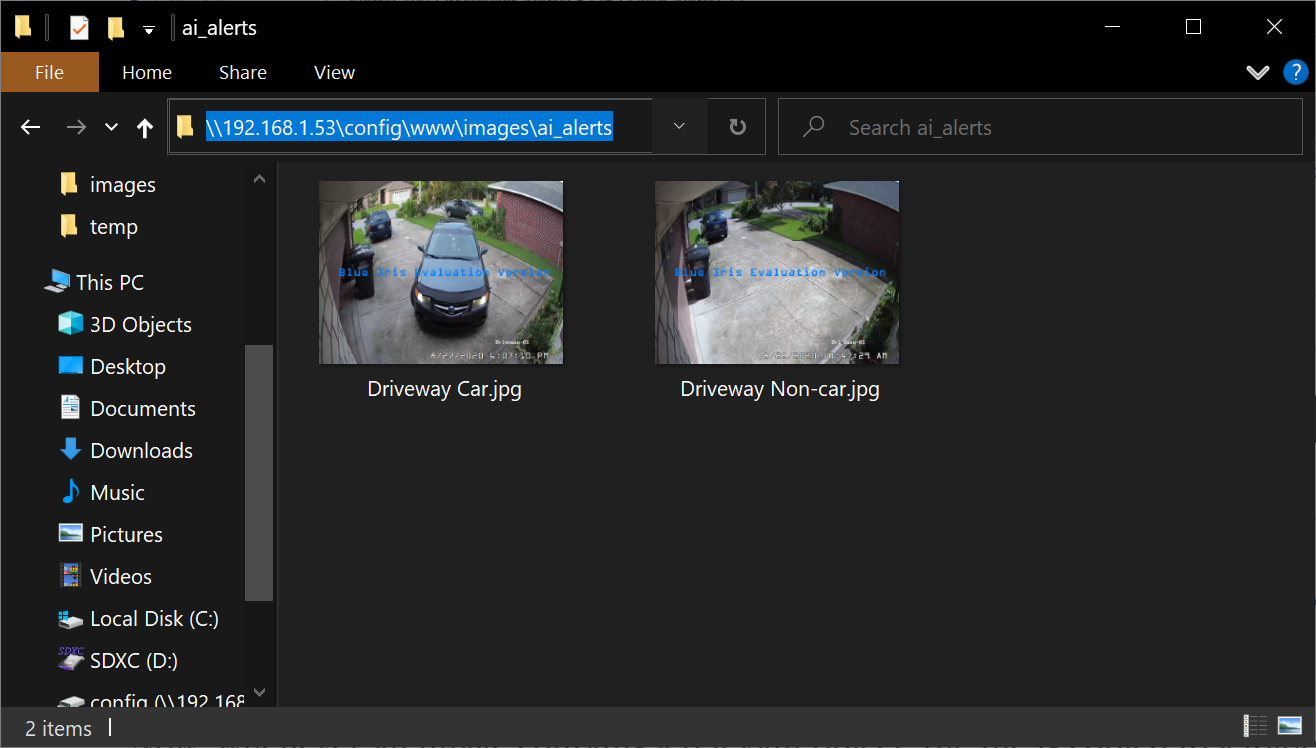

Now, whenever an image contains a relevant object, the file is copied to a folder on the Home Assistant machine. Each configured camera maps onto its own image file in Home Assistant, and that same image is overwritten every time a new alert is published. This way, each camera profile has its own feed in Home Assistant and can have individualized automations. In my case, I have one feed for car events, and one feed for non-car events (e.g. people, bicycles, animals).
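To make the "one file per camera, overwritten on every alert" idea concrete, here is a minimal Python sketch. It is not the code from my fork (the AI Tool itself is not written in Python); the function name `publish_alert` and its parameters are made up for illustration, and the destination folder is whatever network path you have mapped to the Home Assistant machine.

```python
import shutil
from pathlib import Path


def publish_alert(snapshot: Path, alerts_dir: Path, camera_name: str) -> Path:
    """Copy a flagged snapshot to <alerts_dir>/<camera_name>.jpg.

    Hypothetical helper: the same target file is overwritten on every alert,
    so each camera profile always exposes exactly one "latest" image.
    """
    alerts_dir.mkdir(parents=True, exist_ok=True)
    target = alerts_dir / f"{camera_name}.jpg"
    # copyfile overwrites the existing file in place if it is already there.
    shutil.copyfile(snapshot, target)
    return target
```

Called twice for the same camera name, this leaves a single file behind that holds the most recent snapshot.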

Automations

Now, the images are available in Home Assistant, but we need a way to trigger some automations when new alerts come in. I set this up using a Folder Watcher in Home Assistant which fires events whenever one of the image files is modified.

    folder_watcher:
      - folder: /config/www/images/ai_alerts
        patterns:
          - '*.jpg'

Specifically, this integration pushes messages onto the event bus any time a jpg file in the ai_alerts folder is modified. Next, I hooked into those events using Node-RED.

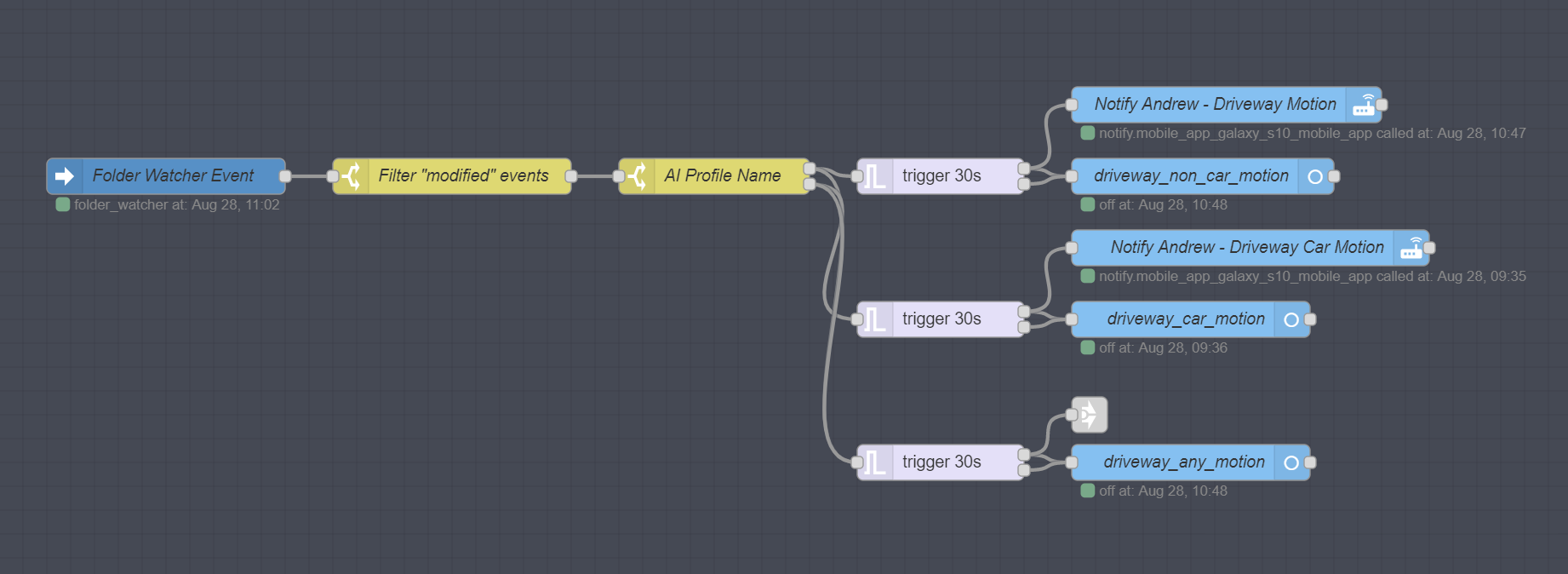

The flow above triggers any time a folder_watcher event is published on the event bus. It keeps only "modified" events and discards the rest, then splits into different paths based on the name of the jpg file. Finally, the flow triggers binary sensors and mobile push notifications that are customized based on the type of event, either car or non-car. There is also a catch-all binary sensor that is set for all motion event types.
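For readers who don't use Node-RED, the filter-and-switch part of the flow can be sketched in a few lines of Python. This is only an illustration of the routing logic, not an export of the flow. It assumes the folder_watcher event data carries `event_type` and `file` keys, and the catch-all sensor name is hypothetical; the two file names and the car/non-car sensor ids are the ones used elsewhere in this post.

```python
from typing import List

# File names and the two specific sensor ids appear in this post.
SENSORS_BY_FILE = {
    "Driveway Car.jpg": "binary_sensor.driveway_car",
    "Driveway Non-car.jpg": "binary_sensor.driveway_non_car",
}
# Hypothetical id for the catch-all sensor.
CATCH_ALL_SENSOR = "binary_sensor.driveway_motion"


def sensors_for_event(event_data: dict) -> List[str]:
    """Return the sensors to trip for one folder_watcher event."""
    # Keep only "modified" events (assumed key name: event_type).
    if event_data.get("event_type") != "modified":
        return []
    # Switch on the image file name (assumed key name: file).
    sensor = SENSORS_BY_FILE.get(event_data.get("file"))
    if sensor is None:
        return []
    # Every recognised alert also trips the catch-all sensor.
    return [sensor, CATCH_ALL_SENSOR]
```

A "modified" event for `Driveway Car.jpg` would trip the car sensor plus the catch-all, while any other event type or an unknown file name trips nothing.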

If the sensor is not tripped again for 30 seconds, the trigger node turns the sensor off again. The trigger node also blocks new messages while motion is active, so you don't get a continuous stream of notifications during the same motion event.
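The behavior of the trigger node can be modeled roughly like this. It is a sketch of my reading of the behavior described above, not Node-RED code: the class name `MotionGate` and its methods are hypothetical, and time is passed in explicitly as seconds so the logic is easy to follow.

```python
from typing import Optional


class MotionGate:
    """Hypothetical model: a sensor that stays on until 30 quiet seconds pass."""

    def __init__(self, hold_seconds: float = 30.0) -> None:
        self.hold_seconds = hold_seconds
        self._off_at: Optional[float] = None  # time at which the sensor turns off

    def is_on(self, now: float) -> bool:
        return self._off_at is not None and now < self._off_at

    def trip(self, now: float) -> bool:
        """Register an alert at time `now`.

        Returns True if a notification should go out, i.e. the sensor was off.
        Alerts that arrive while the sensor is on only extend the hold time.
        """
        was_on = self.is_on(now)
        self._off_at = now + self.hold_seconds
        return not was_on
```

With the default 30 second hold, an alert at t=0 sends a notification, a second alert at t=10 is swallowed but keeps the sensor on until t=40, and an alert at t=45 counts as a new motion event.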

And that's it! Now, whenever the AI Tool detects a relevant object, we get a push notification from Home Assistant with the precise image that contains the object(s). The binary sensors are not strictly required, but with them in place I can automate anything I want when a car or a person comes onto the driveway.

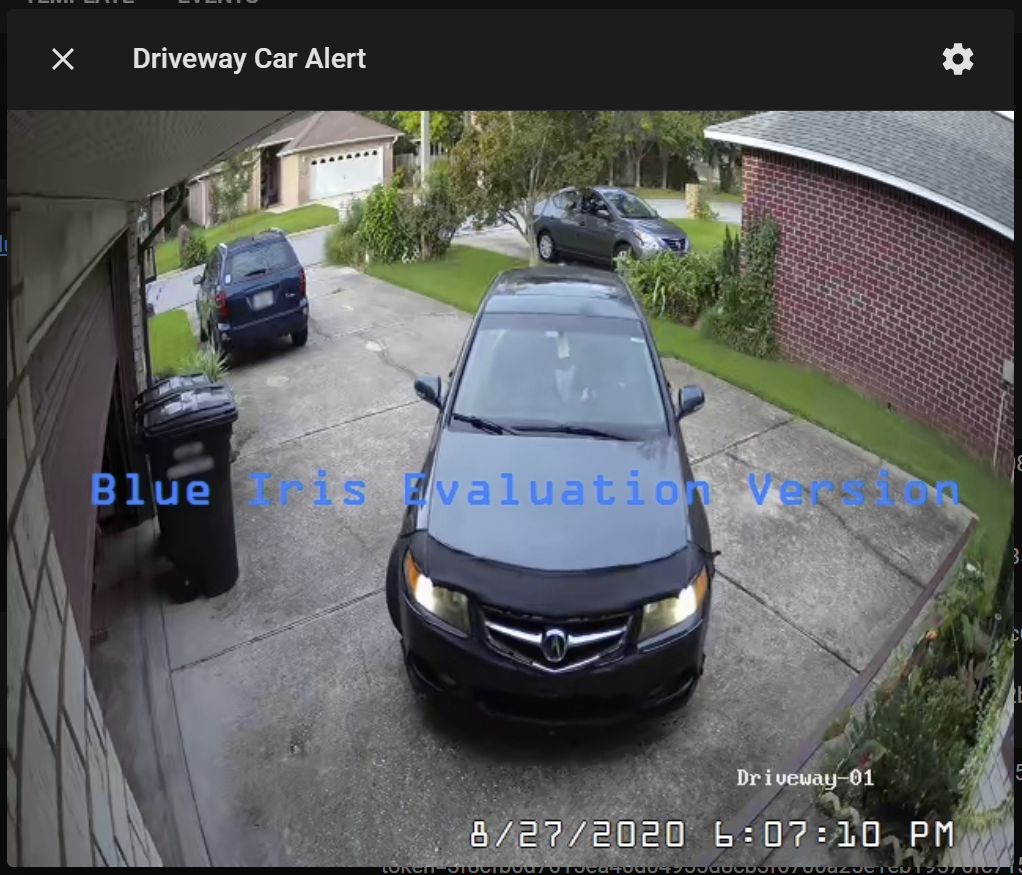

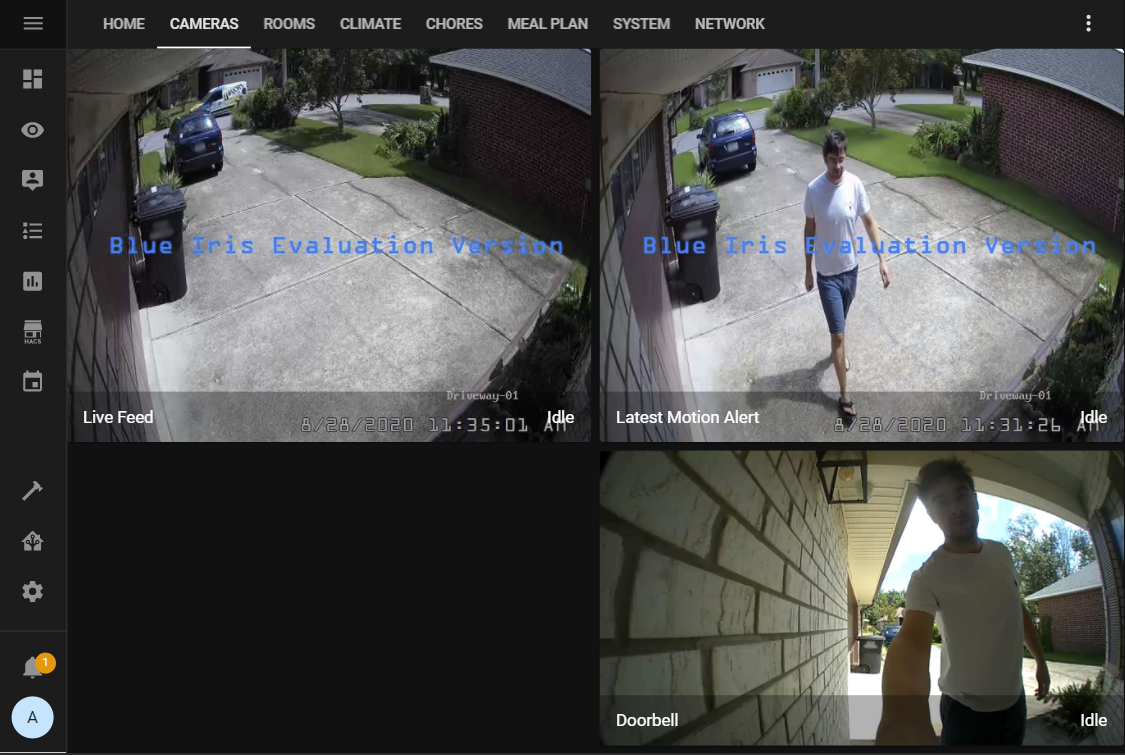

Bonus – Still-Image Alert Feed

Now that the alert images are conveniently stored in the Home Assistant file system, I thought it would be nice to display them in Lovelace so that I can always see the latest motion event on my kiosks or in the mobile app. This is easy to do with the camera integration.

    camera:
      - platform: generic
        still_image_url: 'http://127.0.0.1:8123/local/images/ai_alerts/Driveway Non-car.jpg'
        name: Driveway Non-car Alert
      - platform: generic
        still_image_url: 'http://127.0.0.1:8123/local/images/ai_alerts/Driveway Car.jpg'
        name: Driveway Car Alert
      - platform: generic
        still_image_url: >
          {% if (states.binary_sensor.driveway_non_car.last_changed < states.binary_sensor.driveway_car.last_changed) %}
          http://127.0.0.1:8123/local/images/ai_alerts/Driveway Car.jpg
          {% else %}
          http://127.0.0.1:8123/local/images/ai_alerts/Driveway Non-car.jpg
          {% endif %}
        name: Driveway Last Alert

This configuration will generate 3 "cameras" which always show the latest images pushed to Home Assistant by the AI Tool:

- The Non-car alert feed

- The Car alert feed

- A catch-all to show the latest event based on the timestamps of the binary sensors
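The catch-all camera boils down to a single timestamp comparison. Here is the same decision written as plain Python, purely to spell out what the template does; the function name `latest_alert_url` is made up, and the two arguments stand in for the `last_changed` values of the car and non-car binary sensors.

```python
from datetime import datetime

CAR_URL = "http://127.0.0.1:8123/local/images/ai_alerts/Driveway Car.jpg"
NON_CAR_URL = "http://127.0.0.1:8123/local/images/ai_alerts/Driveway Non-car.jpg"


def latest_alert_url(car_changed: datetime, non_car_changed: datetime) -> str:
    """Pick the image of whichever sensor changed most recently."""
    # Same comparison as the template: if the non-car sensor changed
    # earlier than the car sensor, the car image is the newer one.
    if non_car_changed < car_changed:
        return CAR_URL
    # Otherwise (including a tie) fall back to the non-car image.
    return NON_CAR_URL
```

One thing to keep in mind is that `last_changed` moves whenever a sensor's state changes, including when it turns off, so this is a proxy for "latest alert" rather than an exact record of when each image was written.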

Now, when someone approaches the front door and rings the doorbell, my wall-mounted kiosk by the front door automatically turns on and opens the camera feed. Like a high-tech peephole, it instantly shows the latest AI motion alert alongside the live feed and doorbell cam.

Thanks for reading!